What Is Dynamic Programming? Origin Story, Real-Life Uses, LeetCode Problems & Complete Beginner Guide

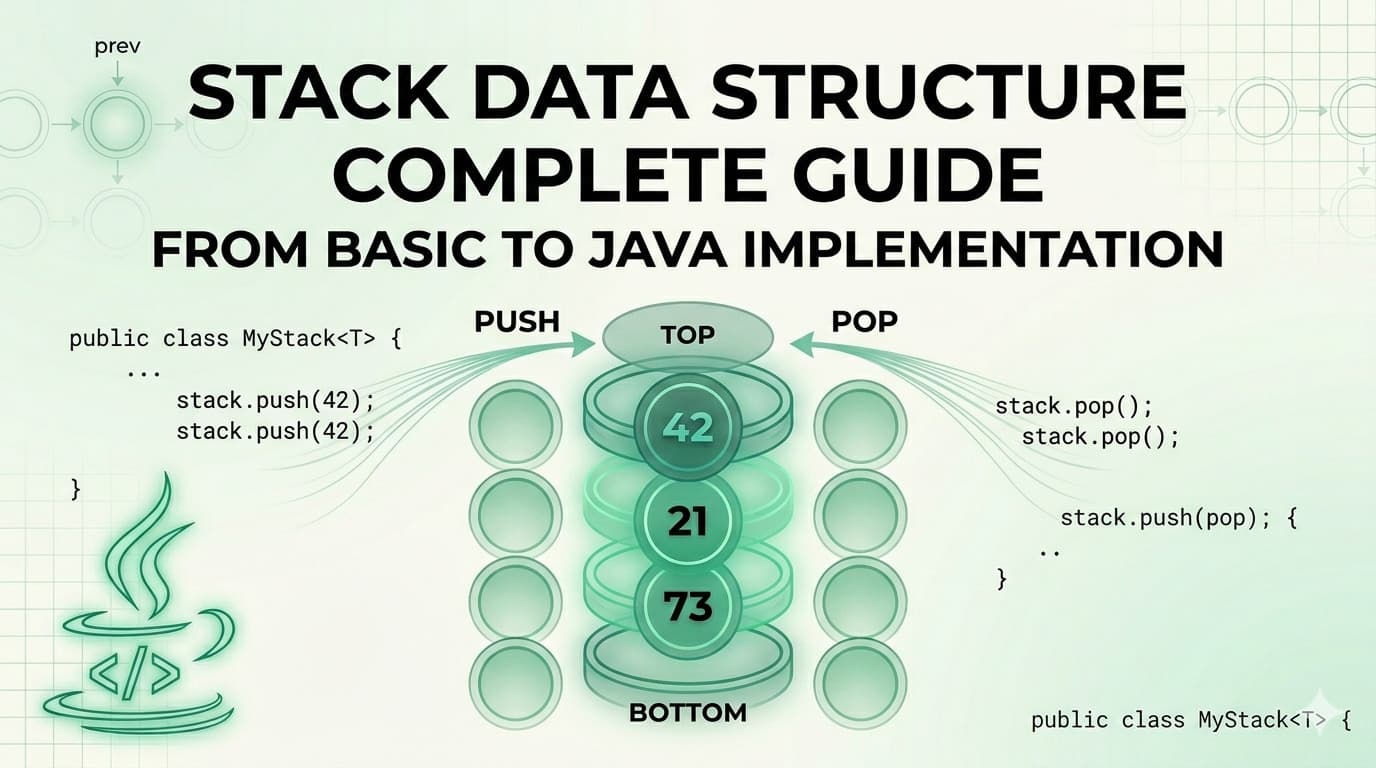

Introduction: Why Dynamic Programming Feels Hard (And Why It Isn't)

If you've ever stared at a LeetCode problem, read the solution, understood every single line, and still had absolutely no idea how someone arrived at it: welcome. You've just experienced the classic Dynamic Programming (DP) confusion.

DP has a reputation. People treat it like some dark art reserved for competitive programmers or Google engineers. The truth? Dynamic Programming is one of the most logical, learnable, and satisfying techniques in all of computer science. Once it clicks, it really clicks.

This guide will take you from zero to genuinely confident. We'll cover where DP came from, how it works, what patterns to learn, how to recognize DP problems, real-world places it shows up, LeetCode problems to practice, time complexity analysis, and the mistakes that trip up even experienced developers.

Let's go.

The Origin Story: Who Invented Dynamic Programming and Why?

The term "Dynamic Programming" was coined by Richard Bellman in the early 1950s while working at RAND Corporation. Here's the funny part: the name was deliberately chosen to sound impressive and vague.

Bellman was doing mathematical research that would have been difficult to fund if described accurately. RAND worked for the US Air Force, which ultimately answered to the Secretary of Defense, Charles Wilson, and Wilson had a well-known distaste for the word "research." So Bellman invented a name that sounded suitably grand and mathematical: Dynamic Programming.

In his autobiography, Bellman wrote that he picked the word "dynamic" because it had a precise technical meaning and was also impossible to use negatively. "Programming" referred to the mathematical sense (planning and decision-making), not computer programming.

The underlying idea?
Break a complex problem into overlapping subproblems, solve each subproblem once, and store the result so you never solve it twice.

Bellman's foundational contribution was the Bellman Equation, which underpins not just algorithms but also economics, operations research, and modern reinforcement learning.

So the next time DP feels frustrating, remember: even its inventor named it specifically to confuse people. You're in good company.

What Is Dynamic Programming? (Simple Definition)

Dynamic Programming is an algorithmic technique used to solve problems by:

- Breaking them down into smaller overlapping subproblems
- Solving each subproblem only once
- Storing the result (memoization or tabulation)
- Building up the final solution from those stored results

The key insight is overlapping subproblems + optimal substructure.

Overlapping subproblems means the same smaller problems come up again and again. Instead of solving them every time (like plain recursion does), DP solves them once and caches the answer.

Optimal substructure means the optimal solution to the whole problem can be built from optimal solutions to its subproblems.

If a problem has both of these properties, DP is a good fit.

The Two Approaches to Dynamic Programming

1. Top-Down with Memoization (Recursive + Cache)

You write a recursive solution exactly as you would naturally, but add a cache (usually a dictionary or array) to store results you've already computed.

    cache = {}

    def fib(n):
        if n in cache:
            return cache[n]  # already computed
        if n <= 1:
            return n         # base cases
        cache[n] = fib(n - 1) + fib(n - 2)
        return cache[n]

This is called memoization: remember what you computed so you don't repeat yourself.

Pros: Natural to write, mirrors the recursive thinking, easy to reason about.

Cons: Stack overhead from recursion, risk of stack overflow on large inputs.

2. Bottom-Up with Tabulation (Iterative)

You figure out the order in which subproblems need to be solved, then solve them iteratively from the smallest up, filling a table.

    def fib(n):
        dp = [0, 1]  # base cases
        for i in range(2, n + 1):
            dp.append(dp[i - 1] + dp[i - 2])
        return dp[n]

This is called tabulation: fill a table, cell by cell, bottom to top.

Pros: No recursion overhead, usually faster in practice, easier to optimize space.

Cons: Requires thinking about the order of computation upfront.

🧩 Dynamic Programming Template Code

Before diving into how to recognize DP problems, here are ready-to-use Java templates for the most common DP patterns. Think of these as your reusable blueprints: most DP problems you solve will fit into one of these structures. Just define your state, plug in your recurrence relation, and you are good to go.

Template 1: Top-Down (Memoization)

    import java.util.HashMap;
    import java.util.Map;

    public class TopDownDP {
        private final Map<Integer, Integer> memo = new HashMap<>();

        public int solve(int n) {
            // Base case
            if (n <= 1) return n;
            // Check cache
            if (memo.containsKey(n)) return memo.get(n);
            // Recurrence relation: change this part for your problem (Fibonacci shown)
            int result = solve(n - 1) + solve(n - 2);
            // Store in cache
            memo.put(n, result);
            return result;
        }
    }

Template 2: Bottom-Up (Tabulation)

    public class BottomUpDP {
        public int solve(int n) {
            // Guard small inputs so that dp[1] is always in bounds
            if (n <= 1) return n;
            // Create DP table
            int[] dp = new int[n + 1];
            // Base cases
            dp[0] = 0;
            dp[1] = 1;
            // Fill the table bottom-up
            for (int i = 2; i <= n; i++) {
                // Recurrence relation: change this part for your problem (Fibonacci shown)
                dp[i] = dp[i - 1] + dp[i - 2];
            }
            return dp[n];
        }
    }

Template 3: Bottom-Up with Space Optimization

    public class SpaceOptimizedDP {
        public int solve(int n) {
            // Guard the base cases; without this, n = 0 would return 1
            if (n <= 1) return n;
            // Only keep the last two values instead of the full table
            int prev2 = 0;
            int prev1 = 1;
            for (int i = 2; i <= n; i++) {
                // Recurrence relation: change this part for your problem (Fibonacci shown)
                int curr = prev1 + prev2;
                prev2 = prev1;
                prev1 = curr;
            }
            return prev1;
        }
    }

Template 4: 2D DP (Two Sequences or Grid)

    public class TwoDimensionalDP {
        public int solve(String s1, String s2) {
            int m = s1.length();
            int n = s2.length();
            // Create 2D DP table
            int[][] dp = new int[m + 1][n + 1];
            // Base cases: first row and column
            for (int i = 0; i <= m; i++) dp[i][0] = i;
            for (int j = 0; j <= n; j++) dp[0][j] = j;
            // Fill table cell by cell
            for (int i = 1; i <= m; i++) {
                for (int j = 1; j <= n; j++) {
                    // Recurrence relation: change this part for your problem (edit distance shown)
                    if (s1.charAt(i - 1) == s2.charAt(j - 1)) {
                        dp[i][j] = dp[i - 1][j - 1];
                    } else {
                        dp[i][j] = 1 + Math.min(dp[i - 1][j],
                                Math.min(dp[i][j - 1], dp[i - 1][j - 1]));
                    }
                }
            }
            return dp[m][n];
        }
    }

Template 5: Knapsack Pattern

    public class KnapsackDP {
        public int solve(int[] weights, int[] values, int capacity) {
            int n = weights.length;
            // dp[i][w] = max value using the first i items with capacity w
            int[][] dp = new int[n + 1][capacity + 1];
            for (int i = 1; i <= n; i++) {
                for (int w = 0; w <= capacity; w++) {
                    // Don't take item i
                    dp[i][w] = dp[i - 1][w];
                    // Take item i if it fits
                    if (weights[i - 1] <= w) {
                        dp[i][w] = Math.max(dp[i][w],
                                values[i - 1] + dp[i - 1][w - weights[i - 1]]);
                    }
                }
            }
            return dp[n][capacity];
        }
    }

💡 How to use these templates:

- Step 1: Identify which pattern your problem fits into.
- Step 2: Define what dp[i] or dp[i][j] means in plain English before writing any code.
- Step 3: Write your recurrence relation on paper first.
- Step 4: Plug it into the matching template above.
- Step 5: Handle your specific base cases carefully.

🎥 Visual Learning Resource: Watch This Before Moving Forward

If you prefer learning by watching before reading, this free full-length course by freeCodeCamp is one of the best Dynamic Programming resources on the internet. Watch it alongside this guide for maximum understanding.

Credit: freeCodeCamp, a free, nonprofit coding education platform.

How to Recognize a Dynamic Programming Problem

Ask yourself these four questions:

1. Can I define the problem in terms of smaller versions of itself? If you can write a recursive formula (recurrence relation), DP might apply.

2. Do the subproblems overlap?
If a naive recursive solution would recompute the same thing many times, DP is the right tool.

3. Is there an optimal substructure? Is the best answer to the big problem made up of best answers to smaller problems?

4. Are you looking for a count, minimum, maximum, or yes/no answer? DP problems often ask: "What is the minimum cost?", "How many ways?", "Can we achieve X?"

Red flag words in problem statements: minimum, maximum, shortest, longest, count the number of ways, can we reach, is it possible, fewest steps.

The Core DP Patterns You Must Learn

Mastering DP is really about recognizing patterns. Here are the most important ones:

Pattern 1: 1D DP (Linear). Problems where the state depends on previous elements in a single sequence. Examples: Fibonacci, Climbing Stairs, House Robber.

Pattern 2: 2D DP (Grid / Two-sequence). Problems with two dimensions of state, often grids or two strings. Examples: Longest Common Subsequence, Edit Distance, Unique Paths.

Pattern 3: Interval DP. You consider all possible intervals or subarrays and build solutions from them. Examples: Matrix Chain Multiplication, Burst Balloons, Palindrome Partitioning.

Pattern 4: Knapsack DP (0/1 and Unbounded). You decide whether to include or exclude items under a capacity constraint. Examples: 0/1 Knapsack, Coin Change, Partition Equal Subset Sum.

Pattern 5: DP on Trees. State is defined per node; you combine results from children. Examples: Diameter of Binary Tree, House Robber III, Maximum Path Sum.

Pattern 6: DP on Subsets / Bitmask DP. State includes a bitmask representing which elements have been chosen. Examples: Travelling Salesman Problem, Shortest Superstring.

Pattern 7: DP on Strings. Matching, editing, or counting arrangements within strings. Examples: Longest Palindromic Subsequence, Regular Expression Matching, Wildcard Matching.

Top LeetCode Problems to Practice Dynamic Programming (With Links)

Here are the essential problems, organized by difficulty and pattern.
Solve them in this order.

Beginner: Warm Up

| Problem | Pattern | Link |
| --- | --- | --- |
| Climbing Stairs | 1D DP | https://leetcode.com/problems/climbing-stairs/ |
| Fibonacci Number | 1D DP | https://leetcode.com/problems/fibonacci-number/ |
| House Robber | 1D DP | https://leetcode.com/problems/house-robber/ |
| Min Cost Climbing Stairs | 1D DP | https://leetcode.com/problems/min-cost-climbing-stairs/ |
| Best Time to Buy and Sell Stock | 1D DP | https://leetcode.com/problems/best-time-to-buy-and-sell-stock/ |

Intermediate: Core Patterns

| Problem | Pattern | Link |
| --- | --- | --- |
| Coin Change | Knapsack | https://leetcode.com/problems/coin-change/ |
| Longest Increasing Subsequence | 1D DP | https://leetcode.com/problems/longest-increasing-subsequence/ |
| Longest Common Subsequence | 2D DP | https://leetcode.com/problems/longest-common-subsequence/ |
| 0/1 Knapsack (via Subset Sum) | Knapsack | https://leetcode.com/problems/partition-equal-subset-sum/ |
| Unique Paths | 2D Grid DP | https://leetcode.com/problems/unique-paths/ |
| Jump Game | 1D DP / Greedy | https://leetcode.com/problems/jump-game/ |
| Word Break | String DP | https://leetcode.com/problems/word-break/ |
| Decode Ways | 1D DP | https://leetcode.com/problems/decode-ways/ |
| Edit Distance | 2D String DP | https://leetcode.com/problems/edit-distance/ |
| Triangle | 2D DP | https://leetcode.com/problems/triangle/ |

Advanced: Interview Level

| Problem | Pattern | Link |
| --- | --- | --- |
| Burst Balloons | Interval DP | https://leetcode.com/problems/burst-balloons/ |
| Regular Expression Matching | String DP | https://leetcode.com/problems/regular-expression-matching/ |
| Wildcard Matching | String DP | https://leetcode.com/problems/wildcard-matching/ |
| Palindrome Partitioning II | Interval DP | https://leetcode.com/problems/palindrome-partitioning-ii/ |
| Maximum Profit in Job Scheduling | DP + Binary Search | https://leetcode.com/problems/maximum-profit-in-job-scheduling/ |
| Distinct Subsequences | 2D DP | https://leetcode.com/problems/distinct-subsequences/ |
| Cherry Pickup | 3D DP | https://leetcode.com/problems/cherry-pickup/ |

Real-World Use Cases of Dynamic Programming

DP is not just for coding interviews. It is deeply embedded in the technology you use every day.

1. Google Maps & Navigation (Shortest Path): Shortest-path routing is built on the same optimal-substructure idea as DP. Bellman-Ford is a textbook DP algorithm; Dijkstra's algorithm is usually classified as greedy, but it relies on the same principle that a shortest path is made of shorter shortest paths. GPS apps use algorithms from this family to find the shortest or fastest path between two points across millions of nodes.

2. Spell Checkers & Autocorrect (Edit Distance): When your phone corrects "teh" to "the," it is typically comparing what you typed against candidate dictionary words using Edit Distance, a classic DP problem.

3. DNA Sequence Alignment (Bioinformatics): Researchers use the Needleman-Wunsch and Smith-Waterman algorithms, both DP, to align DNA and protein sequences and find similarities between species or identify mutations.

4. Video Compression (MPEG, H.264): Video encoders can use DP-style optimization to decide how to encode frames efficiently, for example which frames to store as full images and which to store as differences from other frames.

5. Financial Portfolio Optimization: Investment algorithms can use DP to allocate assets under risk or budget constraints; simple versions of the problem resemble knapsack.

6. Natural Language Processing (NLP): The Viterbi algorithm, used in speech recognition, part-of-speech tagging, and machine translation, is a DP algorithm. Voice assistants built on these techniques have DP working behind the scenes.

7. Game AI (Chess, Checkers): Minimax search with memoization applies the DP idea to game trees: board positions that have already been evaluated are cached, so the engine finds the best move without recomputing already-seen positions.

8. Compiler Optimization: Compilers apply DP to problems such as instruction selection and choosing an efficient order of operations, with the goal of generating efficient machine code.

9. Text Justification (Word Processors): TeX and LaTeX break paragraphs into lines with the Knuth-Plass algorithm, a DP approach that minimizes raggedness across the whole paragraph. Simpler editors often break lines greedily instead.

10. Resource Scheduling in Cloud Computing: Cloud platforms have to assign computational tasks to servers cost-efficiently. Such assignment and scheduling problems are often modeled with knapsack-style DP.

Time Complexity Analysis of Common DP Problems

Understanding the time complexity of DP is critical for interviews and for building scalable systems.

| Problem | Time Complexity | Space Complexity | Notes |
| --- | --- | --- | --- |
| Fibonacci (naive recursion) | O(2ⁿ) | O(n) | Exponential, terrible |
| Fibonacci (DP) | O(n) | O(1) with optimization | Linear, excellent |
| Longest Common Subsequence | O(m × n) | O(m × n) | m, n = lengths of two strings |
| Edit Distance | O(m × n) | O(m × n) | Can optimize space to O(n) |
| 0/1 Knapsack | O(n × W) | O(n × W) | n = items, W = capacity |
| Coin Change | O(n × amount) | O(amount) | n = number of coin denominations; classic tabulation |
| Longest Increasing Subsequence | O(n²) or O(n log n) | O(n) | Binary search version is faster |
| Matrix Chain Multiplication | O(n³) | O(n²) | Interval DP |
| Travelling Salesman (bitmask) | O(2ⁿ × n²) | O(2ⁿ × n) | Still exponential but manageable for small n |

The general rule: DP trades space for time. You spend memory to avoid recomputation. The time complexity equals the number of unique states multiplied by the work done per state.

How to Learn and Master Dynamic Programming: Step by Step

Here is an honest, structured path to mastery:

Step 1: Get recursion absolutely solid first. DP is memoized recursion at its core. If you cannot write clean recursive solutions confidently, DP will remain confusing. Practice at least 20 pure recursion problems first.

Step 2: Start with the classics. Fibonacci → Climbing Stairs → House Robber → Coin Change. These teach you the core pattern of defining state and transition without overwhelming you.

Step 3: Learn to define state explicitly. Before writing any code, ask: "What does dp[i] represent?" Write it in plain English. "dp[i] = the minimum cost to reach step i." This single habit separates good DP thinkers from struggling ones.

Step 4: Write the recurrence relation before coding. On paper or in a comment. Example: dp[i] = min(dp[i-1] + cost[i-1], dp[i-2] + cost[i-2]).
If you can write the recurrence, the code writes itself.

Step 5: Master one pattern at a time. Don't jump between knapsack and interval DP in the same week. Spend a few days on each pattern until it feels intuitive.

Step 6: Solve the same problem both ways. Top-down and bottom-up. This builds deep understanding of what DP is actually doing.

Step 7: Optimize space after getting correctness. Many 2D DP solutions can use a single row instead of a full matrix. Learn this optimization after you understand the full solution.

Step 8: Do timed practice under interview conditions. Give yourself 35 minutes per problem. Review what you got wrong. DP is a muscle: it builds with reps.

Common Mistakes in Dynamic Programming (And How to Avoid Them)

Mistake 1: Jumping to code before defining state. The most common DP error. Always define what dp[i] or dp[i][j] means before writing a single line of code.

Mistake 2: Wrong base cases. A single wrong base case corrupts every answer built on top of it. Trace through your base cases manually on a tiny example before running code.

Mistake 3: Off-by-one errors in indexing. Whether your dp array is 0-indexed or 1-indexed must be 100% consistent throughout. This causes more bugs in DP than almost anything else.

Mistake 4: Confusing top-down with bottom-up state order. In bottom-up DP, you must ensure that when you compute dp[i], all values it depends on are already filled. If you compute in the wrong order, you get garbage answers.

Mistake 5: Memoizing in the wrong dimension. In 2D problems, some people cache only one dimension when the state actually requires two. Always identify all variables that affect the outcome.

Mistake 6: Using global mutable state in recursion. If you use a shared array and don't clear it between test cases, you'll get wrong answers on subsequent inputs. Always scope your cache correctly.

Mistake 7: Not considering the full state space.
In problems like Knapsack, forgetting that the state is (item index, remaining capacity), not just item index, leads to fundamentally wrong solutions.

Mistake 8: Giving up after not recognizing the pattern immediately. DP problems don't announce themselves. The skill is learning to ask "are there overlapping subproblems here?" on every problem. This takes time. Don't mistake unfamiliarity for inability.

Frequently Asked Questions About Dynamic Programming

Q: Is Dynamic Programming the same as recursion?
Not exactly. Recursion is a technique for breaking problems into smaller pieces. DP is recursion plus memoization, or the equivalent iterative tabulation. All DP can be written recursively, but not all recursion is DP.

Q: What is the difference between DP and Divide and Conquer?
Divide and Conquer (like Merge Sort) breaks problems into non-overlapping subproblems. DP is used when subproblems overlap, meaning the same subproblem is solved multiple times in a naive approach.

Q: How do I know when NOT to use DP?
If the subproblems don't overlap (no repeated computation), caching buys you nothing and plain recursion or divide-and-conquer is enough. If a locally optimal choice is provably always safe, greedy is simpler. If the problem has no optimal substructure, DP won't give a correct answer.

Q: Do I need to memorize DP solutions for interviews?
No. You need to recognize patterns and be able to derive the recurrence relation. Memorizing solutions without understanding them will fail you in interviews. Focus on the thinking process.

Q: How long does it take to get good at DP?
Most people start to feel genuinely comfortable after solving 40–60 varied DP problems with deliberate practice. The first 10 feel impossible. The next 20 feel hard. After 50, patterns start feeling obvious.

Q: What programming language is best for DP?
Any language works. Python is often used for learning because its dictionaries make memoization trivial. C++ is preferred in competitive programming for its speed. For interviews, use whatever language you're most comfortable in.

Q: What is space optimization in DP?
Many DP problems only look back one or two rows to compute the current row. In those cases, you can replace an n×m table with just two arrays (or even one), reducing space complexity from O(n×m) to O(m). This is called space optimization or the rolling array technique.

Q: Can DP be applied to graph problems?
Absolutely. Shortest path algorithms like Bellman-Ford are DP. Longest path in a DAG is DP. DP on trees is a rich subfield. Anywhere you have states and transitions, DP can potentially apply.

Q: Is Greedy a type of Dynamic Programming?
Greedy is related but distinct. Greedy makes locally optimal choices without reconsidering. DP considers all choices and picks the globally optimal one. Some DP solutions reduce to greedy when the structure allows, but they are different techniques.

Q: What resources should I use to learn DP?
For structured learning: Neetcode.io (organized problem list), Striver's DP Series on YouTube, and the book "Introduction to Algorithms" (CLRS) for theoretical depth. For practice: LeetCode's Dynamic Programming study plan and Codeforces for competitive DP.

Final Thoughts: Dynamic Programming Is a Superpower

Dynamic Programming is genuinely one of the most powerful ideas in computer science. It shows up in your GPS, your autocorrect, your streaming video, your bank's risk models, and the AI assistants you talk to daily.

The path to mastering it is not memorization. It is developing the habit of asking: can I break this into smaller problems that overlap? And then learning to define state clearly, write the recurrence, and trust the process.

Start with Climbing Stairs. Write dp[i] in plain English before every problem. Solve everything twice: top-down and bottom-up. Do 50 problems with genuine reflection, not just accepted solutions.

The click moment will come. And when it does, you'll wonder why it ever felt hard.
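
To make that closing advice concrete, here is a minimal sketch of Climbing Stairs solved both ways, with the state written out in plain English first. The class name `ClimbingStairs` and the method names `topDown` and `bottomUp` are made up for this illustration, and the sketch assumes n is non-negative and that each move climbs one or two steps, as in the LeetCode problem. Treat it as one possible way to write it, not a reference solution.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical class name, used only for this illustration.
class ClimbingStairs {

    // State: ways(i) = number of distinct ways to reach step i
    // when each move climbs 1 or 2 steps (assumes i >= 0).
    // Recurrence: ways(i) = ways(i - 1) + ways(i - 2), with ways(0) = ways(1) = 1.

    // Top-down: plain recursion plus a cache supplied by the caller.
    static long topDown(int n, Map<Integer, Long> memo) {
        if (n <= 1) return 1;                  // base cases
        Long cached = memo.get(n);
        if (cached != null) return cached;     // reuse a stored answer
        long result = topDown(n - 1, memo) + topDown(n - 2, memo);
        memo.put(n, result);
        return result;
    }

    // Convenience overload: a fresh cache per call, so no state
    // leaks between inputs.
    static long topDown(int n) {
        return topDown(n, new HashMap<>());
    }

    // Bottom-up: fill the table from the smallest step upward, so every
    // value dp[i] depends on is already computed.
    static long bottomUp(int n) {
        if (n <= 1) return 1;
        long[] dp = new long[n + 1];
        dp[0] = 1;
        dp[1] = 1;
        for (int i = 2; i <= n; i++) {
            dp[i] = dp[i - 1] + dp[i - 2];
        }
        return dp[n];
    }

    public static void main(String[] args) {
        System.out.println(topDown(5));   // prints 8
        System.out.println(bottomUp(5));  // prints 8
    }
}
```

Both methods implement the same recurrence, so comparing their outputs on small inputs is a cheap way to catch a wrong base case or an off-by-one error. The cache is created per call rather than stored in a shared field, which sidesteps the stale-cache problem described in Mistake 6.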