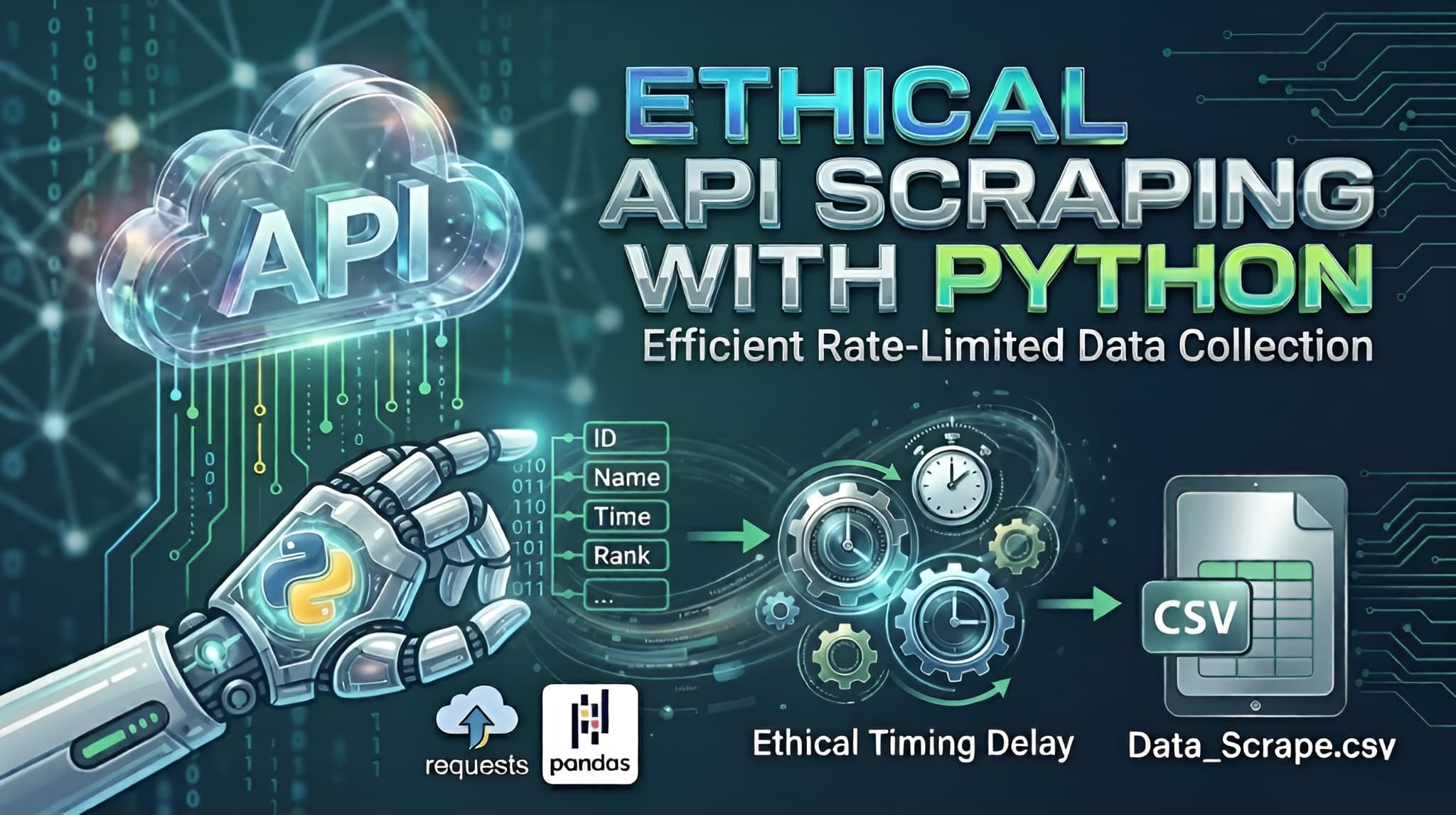

In the world of data science, the data you need isn't always available in a neat, downloadable package. Often, it sits behind an API that requires individual queries for every piece of information.

If you try to "blast" an API with thousands of requests per second, you’ll likely trip its rate limiting or DDoS (Distributed Denial of Service) protection, resulting in a blocked IP or a banned account. Today, we’ll walk through a professional Python template designed to fetch data sequentially, respect server limits, and save the results into a clean CSV file.

The Strategy: "Slow and Steady Wins the Race"

When scraping an API, we want to mimic human behavior. Our script follows three golden rules:

- Iterative Logic: Loop through a range of IDs (or "Bib numbers" in this case).

- Defensive Timing: Introduce a random delay between requests.

- Graceful Error Handling: Ensure one failed request doesn't crash the whole script.

The Python Implementation

Below is the generalized template. Notice how we use the requests library for communication and pandas for data organization.

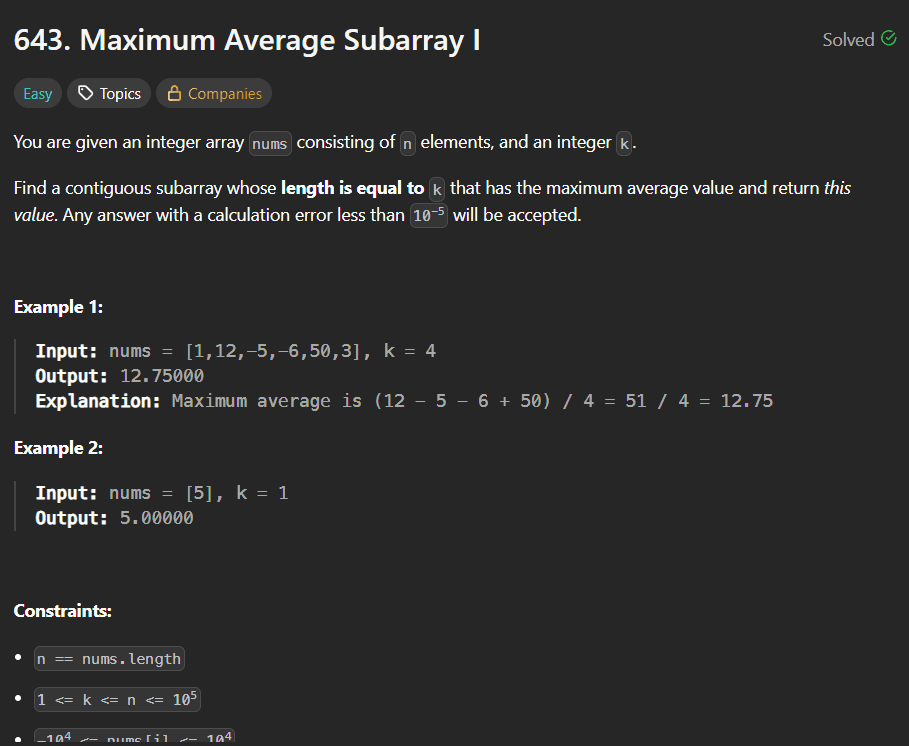

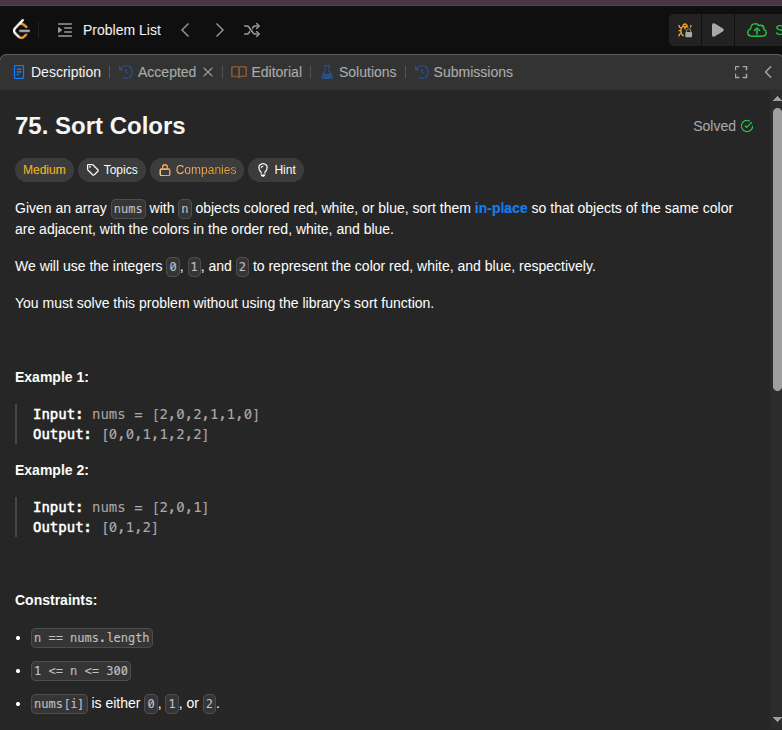

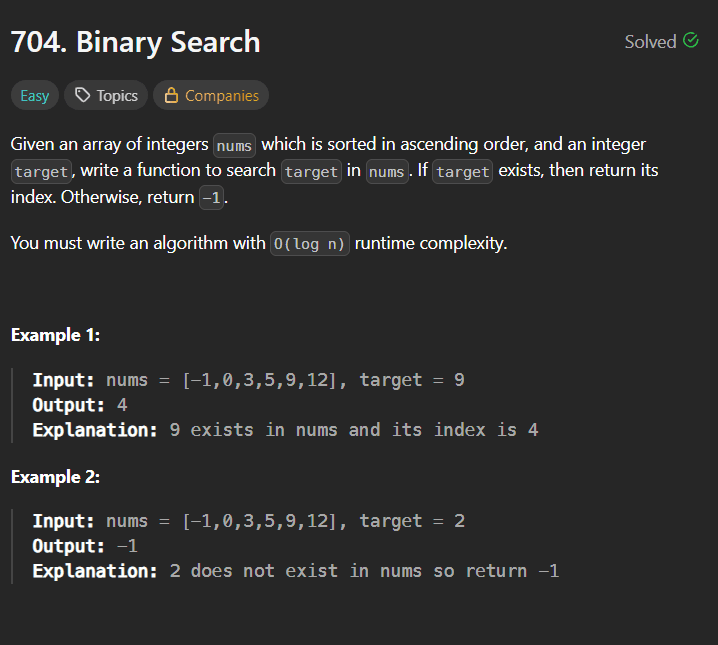

Python Code Snippet:
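
What follows is a minimal sketch of what such a template might look like, not the author's exact code. The endpoint `API_URL`, the `User-Agent` string, the response fields `name` and `rank`, and the helper names `fetch_result` and `collect` are all assumptions made for illustration.

```python
import random
import time

import pandas as pd
import requests

# Hypothetical values for illustration; replace with the real endpoint and fields.
API_URL = "https://api.example.com/results/{bib}"
HEADERS = {"User-Agent": "Mozilla/5.0 (compatible; data-collector/1.0)"}


def fetch_result(session, bib, timeout=10):
    """Request one bib number and return a flat dict of the fields we keep."""
    response = session.get(API_URL.format(bib=bib), headers=HEADERS, timeout=timeout)
    response.raise_for_status()  # treat 4xx/5xx responses as errors
    payload = response.json()
    return {"bib": bib, "name": payload.get("name"), "rank": payload.get("rank")}


def collect(bibs, fetch, min_delay=1.0, max_delay=3.0, sleep=time.sleep):
    """Fetch each bib in order, pausing a random interval after every attempt."""
    rows = []
    for bib in bibs:
        try:
            rows.append(fetch(bib))
        except (requests.RequestException, ValueError) as exc:
            # Log the failure and continue with the next ID.
            print(f"Bib {bib} failed: {exc}")
        sleep(random.uniform(min_delay, max_delay))
    return pd.DataFrame(rows)
```

With a `requests.Session()` named `session`, something like `collect(range(1, 101), lambda bib: fetch_result(session, bib)).to_csv("results.csv", index=False)` would run the loop and write the CSV. Passing `fetch` and `sleep` in as parameters is a design choice of this sketch: it lets you try the loop with a fake fetcher before pointing it at a real server.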

Deep Dive: Why This Works

1. Randomized Delays (The time.sleep Trick)

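Many security systems look for "rhythmic" behavior (e.g., a request exactly every 0.5 seconds). Calling time.sleep(random.uniform(1.0, 3.0)) after each request makes the script wait a different, unpredictable interval of between 1 and 3 seconds each time. This makes the traffic look less mechanical, and it also holds the pace to about one request every two seconds on average, which keeps the load on the server low.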
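
To make the delay idea concrete, here is a small sketch using only the standard library. The helper name `polite_pause` and its `sleep` parameter are assumptions for illustration; the parameter exists so the example can draw delays without actually waiting.

```python
import random
import time


def polite_pause(min_seconds=1.0, max_seconds=3.0, sleep=time.sleep):
    """Sleep for a random duration in [min_seconds, max_seconds] and return it."""
    delay = random.uniform(min_seconds, max_seconds)
    sleep(delay)
    return delay


# Draw a few delays without actually sleeping, to show that they vary.
sample = [round(polite_pause(sleep=lambda _: None), 2) for _ in range(5)]
print(sample)
```

In a real loop you would call `polite_pause()` with no arguments after each request, so the script genuinely waits.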

2. The Power of Headers

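In the HEADERS dictionary, we include a User-Agent header. This tells the server what kind of client (for example, which browser) is making the request. Without it, requests identifies itself with a default python-requests User-Agent, and some APIs block such requests as "unidentified scripts."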
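
One way to set this up, sketched under assumptions: the header values and the example URL below are placeholders, and attaching the headers to a `requests.Session` is just one option (passing `headers=HEADERS` on each call works too). The request is prepared but never sent, so you can inspect what would go out.

```python
import requests

# Hypothetical header values; use a User-Agent that honestly identifies your client.
HEADERS = {
    "User-Agent": "Mozilla/5.0 (compatible; data-collector/1.0)",
    "Accept": "application/json",
}

session = requests.Session()
session.headers.update(HEADERS)  # sent with every request made through this session

# Build the request without sending it, to inspect the headers that would go out.
request = requests.Request("GET", "https://api.example.com/results/1")
prepared = session.prepare_request(request)
print(prepared.headers["User-Agent"])
```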

3. Data Flattening with Pandas

APIs often return deeply nested JSON. By extracting only the fields we need (like name and rank) into one flat dictionary per record and collecting those dictionaries in a list, we make it easy for pandas to turn that list into a DataFrame, a structured table that can be written straight to CSV.
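
The flattening step can be sketched as follows. The nested `responses` are made-up sample data, and the keys `athlete` and `result` are assumptions about how such an API might nest its fields.

```python
import pandas as pd

# Hypothetical nested responses, shaped like what an API might return.
responses = [
    {"bib": 101, "athlete": {"name": "Ana Lima"}, "result": {"rank": 3, "time": "03:41:09"}},
    {"bib": 102, "athlete": {"name": "Ben Ortiz"}, "result": {"rank": 17}},
]

rows = []
for item in responses:
    # Keep only the fields we need; .get() tolerates missing nested keys.
    rows.append({
        "bib": item["bib"],
        "name": item.get("athlete", {}).get("name"),
        "rank": item.get("result", {}).get("rank"),
    })

df = pd.DataFrame(rows)
csv_text = df.to_csv(index=False)  # pass a file path instead to write to disk
print(csv_text)
```

Each dictionary becomes one row and each key becomes one column, which is why keeping the dictionaries flat matters.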

4. Safety First

The try...except block is your safety net. If your internet flickers or the server hiccups, the exception is caught inside the loop, so the script logs the error and moves on to the next ID instead of stopping.
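
A sketch of that safety net, assuming the requests library's exception classes and a hypothetical wrapper named `safe_fetch`. It catches only request-related errors, so a genuine bug in your own code still surfaces instead of being silently skipped.

```python
import logging

import requests

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("scraper")


def safe_fetch(fetch, item_id):
    """Call fetch(item_id); return its result, or None if the request failed."""
    try:
        return fetch(item_id)
    except requests.Timeout:
        logger.warning("ID %s timed out", item_id)
    except requests.HTTPError as exc:
        logger.warning("ID %s returned an HTTP error: %s", item_id, exc)
    except requests.RequestException as exc:
        # Base class for connection problems and other requests errors.
        logger.warning("ID %s failed: %s", item_id, exc)
    return None
```

Inside the main loop, you would skip any ID for which `safe_fetch` returns `None` and carry on with the next one.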

Conclusion

Automating data collection is a superpower for any developer or analyst. By using this template, you can gather thousands of records while staying on the "good side" of the API providers. Just remember: always check a website’s robots.txt or Terms of Service before you start scraping!