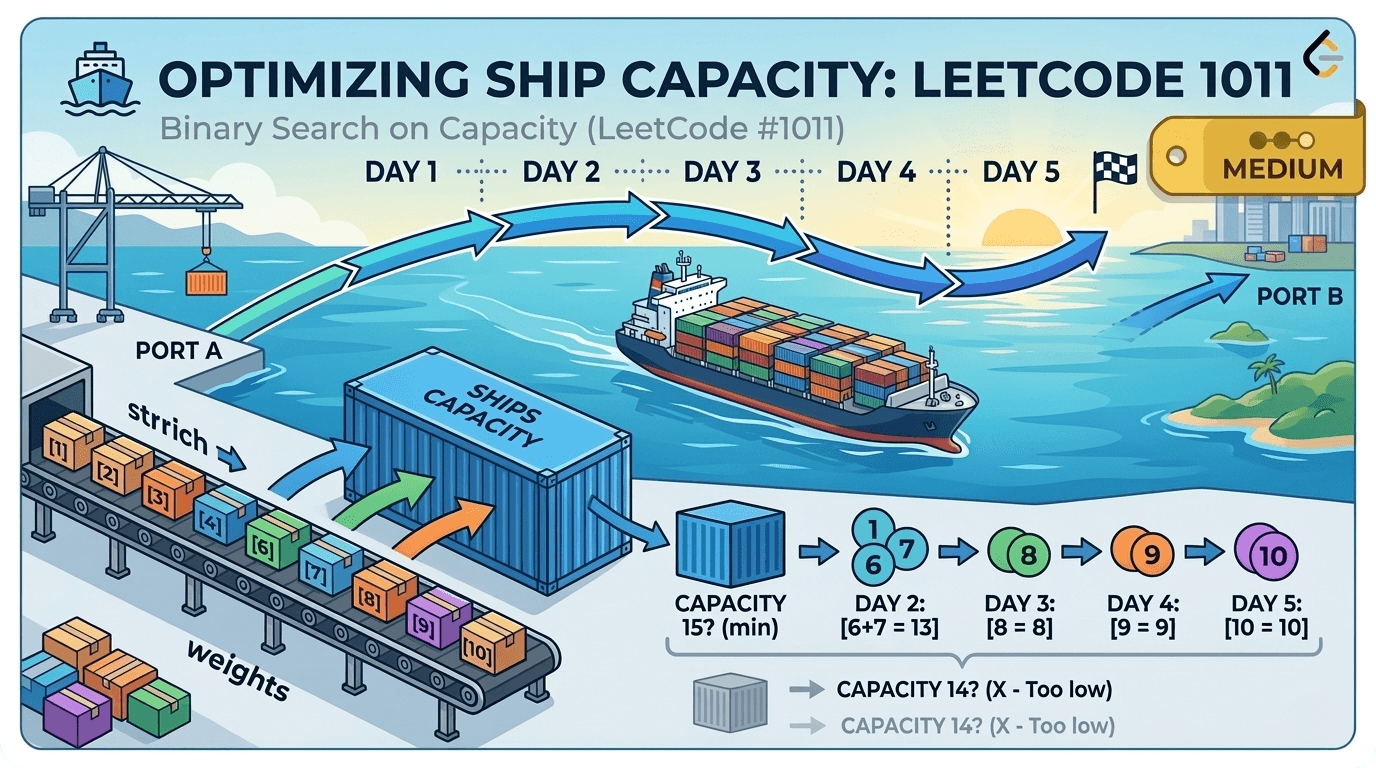

LeetCode 1011 — Capacity To Ship Packages Within D Days | Binary Search on Answer Explained

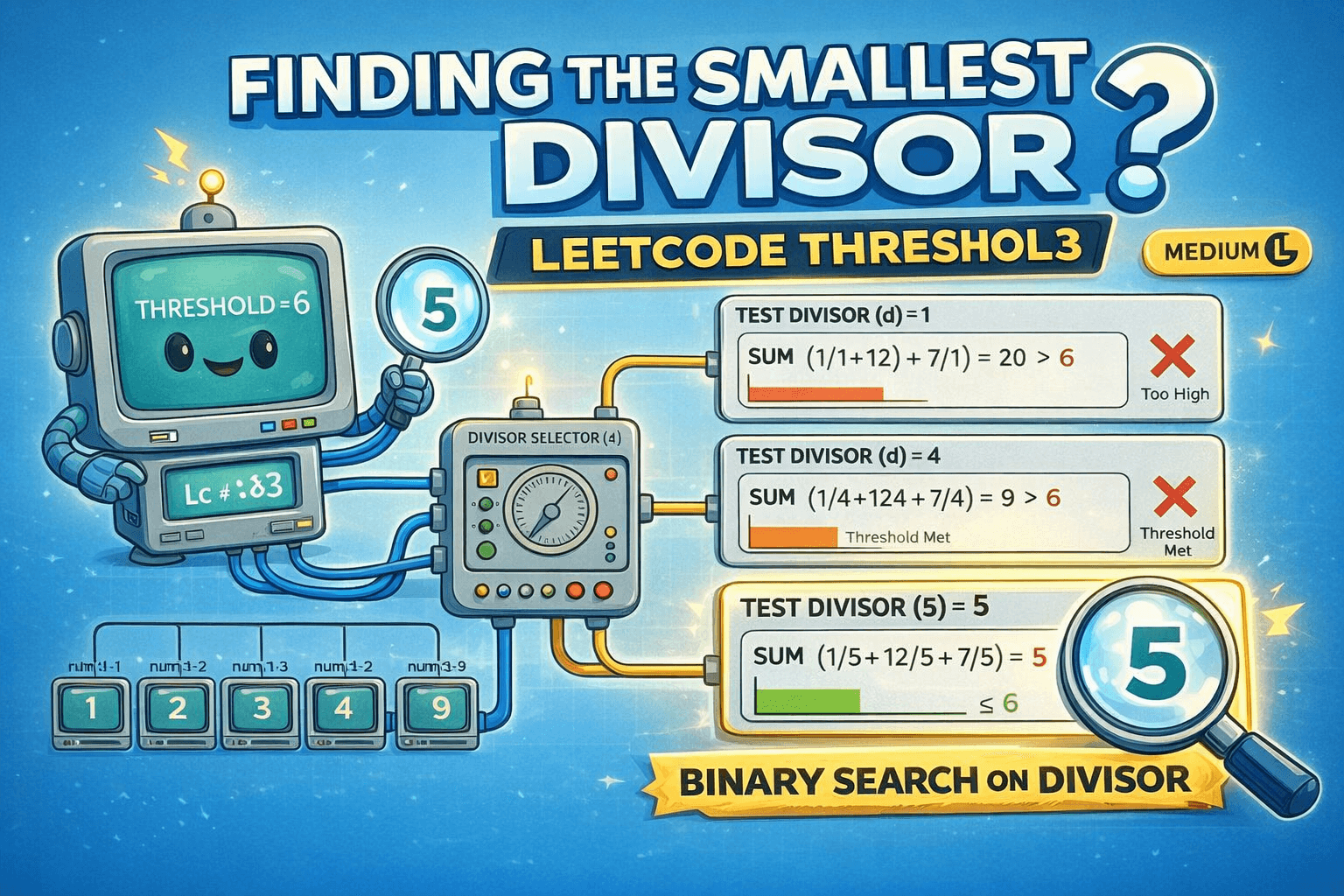

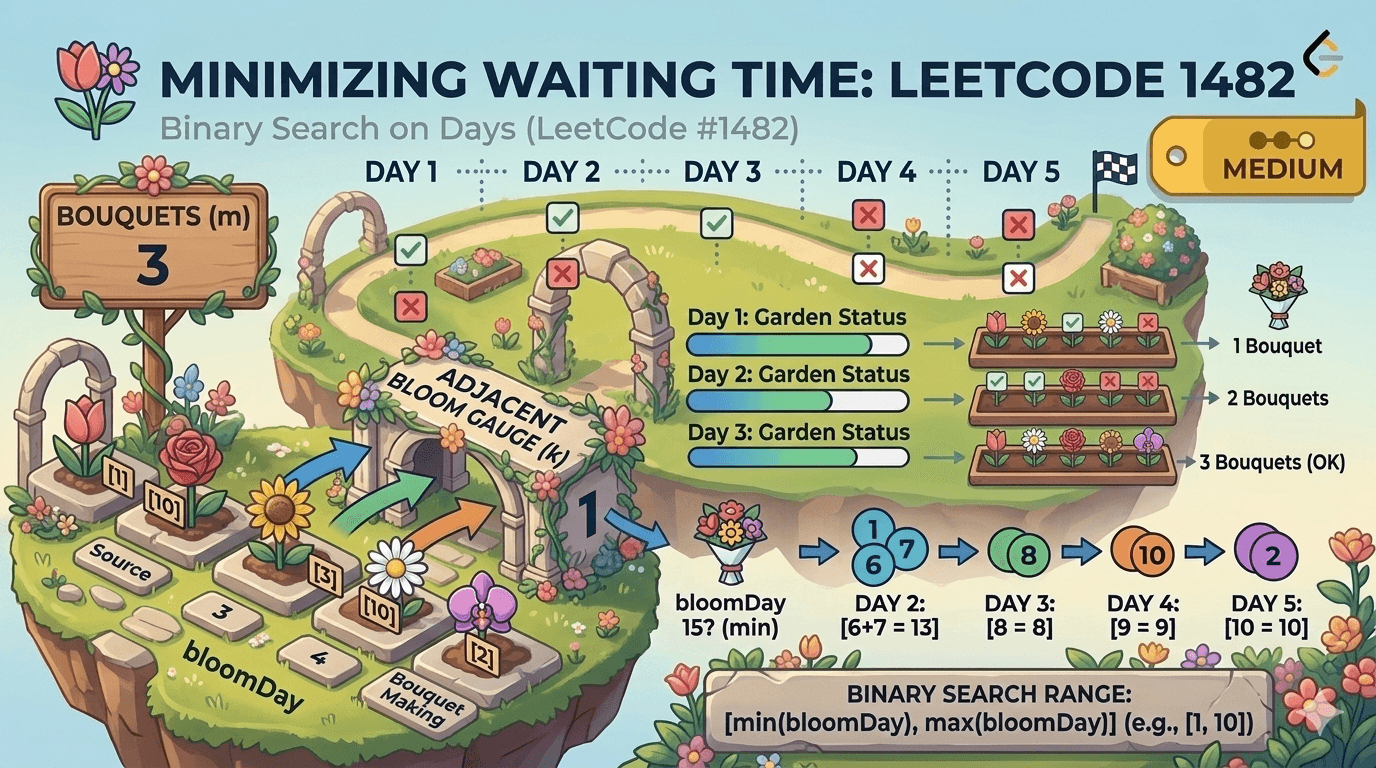

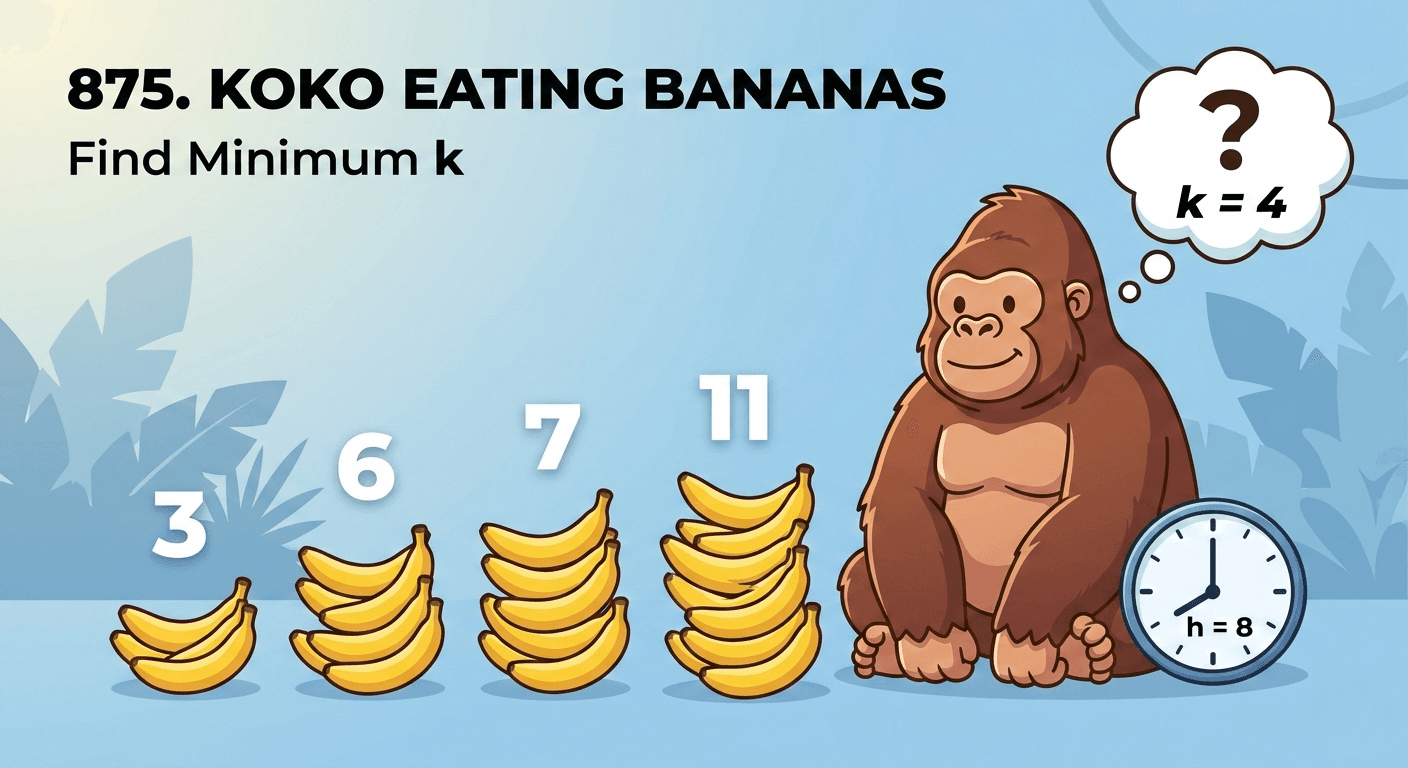

🚀 Try This Problem First!Before reading the solution, attempt it yourself on LeetCode — you'll retain the concept far better.🔗 Problem Link: https://leetcode.com/problems/capacity-to-ship-packages-within-d-days/1. Understanding the ProblemYou have a conveyor belt carrying N packages, each with a given weight. A ship must transport all of them within at most D days. Every day, you load packages in order (no rearranging allowed), and you cannot exceed the ship's weight capacity in a single day.Goal: Find the minimum weight capacity of the ship such that all packages are delivered within D days.Constraints:1 ≤ days ≤ weights.length ≤ 5 × 10⁴1 ≤ weights[i] ≤ 5002. Two Key Observations (Before Writing a Single Line of Code)Before jumping to code, anchor yourself with these two facts:Minimum possible capacity: The ship must at least be able to carry the single heaviest package. If it can't, that package can never be shipped. So:low = max(weights)Maximum possible capacity: If the ship can carry everything at once, it finishes in 1 day — always valid. So:high = sum(weights)Our answer lies somewhere in the range [max(weights), sum(weights)]. This is the classic setup for Binary Search on the Answer.3. Intuition — Why Binary Search?Ask yourself: what happens as ship capacity increases?The number of days needed decreases or stays the same. This is a monotonic relationship — and monotonicity is the green flag for Binary Search.Instead of checking every capacity from 1 to sum(weights) (which is huge), we binary search over the capacity space and for each candidate capacity mid, we ask:"Can all packages be shipped in ≤ D days with this capacity?"This feasibility check runs in O(N) using a greedy simulation, making the whole approach O(N log(sum(weights))).4. The Feasibility Check — Greedy LoadingGiven a capacity mid, simulate loading the ship greedily:Keep adding packages to today's load.The moment adding the next package would exceed mid, start a new day and reset the current load to that package.Count total days used.If days used ≤ D, capacity mid is feasible.5. Binary Search StrategyIf canShip(mid) is true → mid might be the answer, but try smaller. Set ans = mid, high = mid - 1.If canShip(mid) is false → capacity is too small, increase it. Set low = mid + 1.6. Dry Run — Example 1Input: weights = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10], days = 5low = 10 (max weight), high = 55 (sum of weights)IterationlowhighmidDays NeededFeasible?ans11055322✅ Yes3221031203✅ Yes2031019146❌ No2041519175✅ Yes1751516155✅ Yes1561514—loop ends—15Output: 15 ✅7. The Code Implementationclass Solution { /** * Feasibility Check (Helper Function) * * Given a ship capacity 'mid', this function simulates loading packages * greedily and returns true if all packages can be shipped within 'days' days. * * @param mid - candidate ship capacity to test * @param arr - weights array * @param days - allowed number of days * @return true if shipping is possible within 'days' days, false otherwise */ public boolean canShip(int mid, int[] arr, int days) { int daysNeeded = 1; // We always need at least 1 day int currentLoad = 0; // Weight loaded on the ship today for (int i = 0; i < arr.length; i++) { // If adding this package exceeds today's capacity, // start a new day and carry this package on the new day if (currentLoad + arr[i] > mid) { currentLoad = arr[i]; // This package starts the new day's load daysNeeded++; // Increment day count } else { // Package fits — add it to today's load currentLoad += arr[i]; } } // If days needed is within the allowed limit, this capacity is feasible return daysNeeded <= days; } /** * Main Function — Binary Search on the Answer * * Search range: [max(weights), sum(weights)] * - low = max(weights) → ship must carry the heaviest package at minimum * - high = sum(weights) → ship carries everything in one day (upper bound) * * @param weights - array of package weights * @param days - maximum allowed days * @return minimum ship capacity to deliver all packages within 'days' days */ public int shipWithinDays(int[] weights, int days) { int high = 0; // Will become sum(weights) int low = Integer.MIN_VALUE; // Will become max(weights) int ans = 0; // Calculate the binary search bounds for (int a : weights) { high += a; // sum of all weights → upper bound low = Math.max(low, a); // max single weight → lower bound } // Binary Search over the capacity space while (low <= high) { int mid = low + (high - low) / 2; // Avoids integer overflow if (canShip(mid, weights, days)) { // mid works — record it as a potential answer // and try to find a smaller valid capacity ans = mid; high = mid - 1; } else { // mid is too small — increase the capacity low = mid + 1; } } return ans; // Minimum feasible capacity }}8. Code Walkthrough — Step by StepStep 1 — Setting bounds: We iterate through the weights array once to compute low = max(weights) and high = sum(weights). These are our binary search boundaries.Step 2 — Binary Search loop: We pick mid = low + (high - low) / 2 (safe overflow-free midpoint). We check if capacity mid can ship all packages in ≤ D days.Step 3 — Feasibility helper (canShip): We simulate a greedy day-by-day loading. We start with daysNeeded = 1 and currentLoad = 0. For each package, if it fits in today's load, we add it. If not, we start a new day. The key line is:if (currentLoad + arr[i] > mid) { currentLoad = arr[i]; // new day starts with this package daysNeeded++;}Step 4 — Narrowing the search: If feasible → ans = mid, high = mid - 1 (try smaller). If not feasible → low = mid + 1 (try larger).9. Common Mistakes to AvoidMistake 1 — Wrong lower bound: Using low = 1 instead of low = max(weights) works but is far slower since you binary search over a much larger range unnecessarily.Mistake 2 — Wrong condition in canShip: The return should be daysNeeded <= days (not < days). If days needed equals D, it's still valid.Mistake 3 — Off-by-one in greedy loading: When a package doesn't fit, you start a new day with that package as the first item: currentLoad = arr[i]. Do NOT set currentLoad = 0 — that package must still be accounted for.Mistake 4 — Integer overflow in midpoint: Always use mid = low + (high - low) / 2 instead of (low + high) / 2 to avoid overflow when low and high are large.10. Complexity AnalysisTime Complexity: O(N × log(sum(weights)))Binary search runs over the range [max(weights), sum(weights)], which has at most sum(weights) ≈ 500 × 50000 = 25,000,000 values → about log₂(25,000,000) ≈ 25 iterations.Each iteration calls canShip which is O(N).Total: O(N log S) where S = sum(weights).Space Complexity: O(1)No extra data structures. Only a handful of integer variables are used.11. Similar Problems (Same Pattern — Binary Search on Answer)Once you understand this pattern, the following problems become very similar:LeetCode 410 — Split Array Largest SumLeetCode 875 — Koko Eating Bananas [ Blog is also avaliable on this - Read Now ]LeetCode 1283 — Find the Smallest Divisor Given a ThresholdLeetCode 2064 — Minimized Maximum of Products Distributed to Any StoreAll of these follow the same template: define a feasibility check, identify a monotonic answer space, and binary search over it.12. Key Takeaways✅ When you see "find the minimum/maximum value such that a condition holds" — think Binary Search on the Answer.✅ The lower bound of the search space is the most constrained valid value (max weight here).✅ The upper bound is the least constrained valid value (total weight here).✅ The feasibility check must be O(N) or better to keep the overall complexity efficient.✅ Greedy loading (pack as much as possible each day) is optimal here since packages must go in order.Happy Coding! If this helped you, share it with a friend who's grinding LeetCode. 🚀